For months, the CBRE digital engineering team couldn’t close a ticket. Tenants at a global tech company’s headquarters in Washington state — a campus spanning 80-plus buildings and 50,000 occupants — were complaining about uncomfortable conditions. Freezing in the morning, overheating in the afternoon. The building automation system showed the schedules were set. The programming checked out. Equipment simply wasn’t running when it was supposed to.

The team called BMS vendors. Vendors investigated, identified programming errors, reset servers, and closed tickets. The next morning, the failures started again. This cycle repeated for months. Facilities teams were buried in help-desk escalations. The client was losing patience.

April Yi, Director of Digital Engineering at CBRE, described the experience directly: “We couldn’t permanently fix it. Traditional troubleshooting failed after months of trying. The BMS vendor would report the issue fixed, but the next day it would all start over again.”

Every logical suspect in the automation layer was investigated. Broken hardware. Failing devices. Bad programming. None of it held up. And that was the first clue that the problem wasn’t in the automation layer at all.

To understand why that matters, it helps to understand the scale and complexity of what the CBRE team was managing. The campus runs a 16,000-device BACnet network across a mixed-vendor environment — Alerton, Siemens, and Iconics systems integrated into a legacy front-end that had been built, expanded, and patched over more than a decade. In environments like this, the OT network and the automation layer are deeply interdependent, but they’re rarely monitored with equal rigor. The BMS front-end gets constant attention. The network underneath it often doesn’t — until something breaks badly enough to force the question.

Finding the BACnet Router Bottleneck

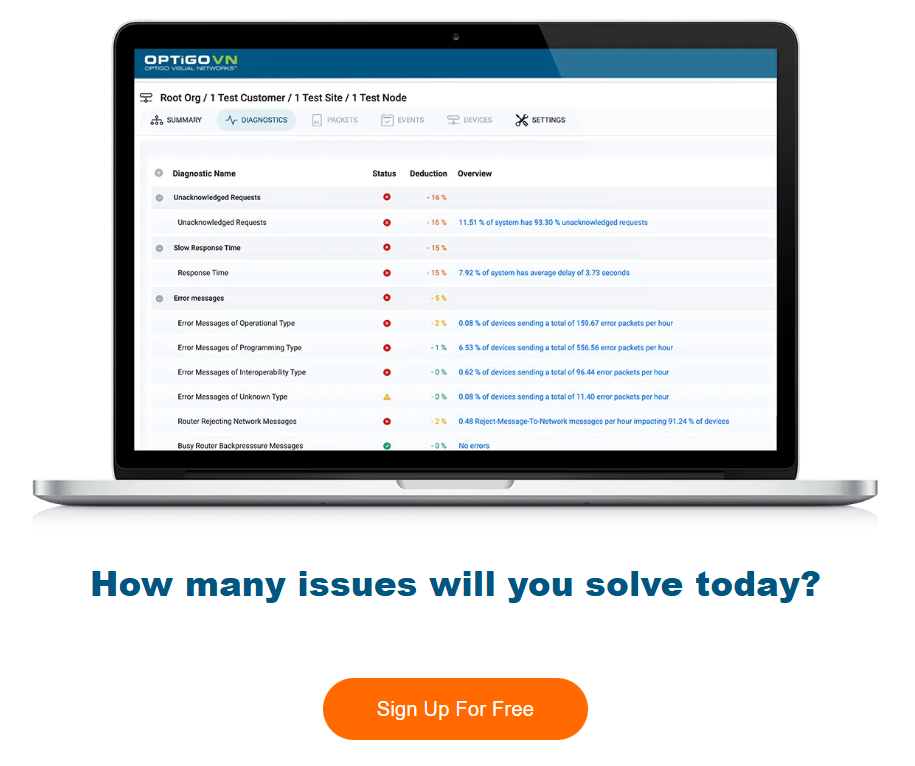

With conventional troubleshooting exhausted, the CBRE team shifted focus to the OT network layer. They deployed Optigo’s diagnostic tools to capture traffic across 20 automation servers spanning the Alerton, Siemens, and Iconics instances on the campus.

What the packet capture revealed had nothing to do with broken hardware or misconfigured programming logic. A single BACnet router — acting as a bridge between the East and West sides of the campus — was the problem. It was handling a volume of traffic it was never designed to carry.

The source of that traffic was configuration drift. Over ten years of system expansion and integration, the Iconics server had been gradually misconfigured to route a large volume of unnecessary cross-campus communication through that one point. Every time a start or stop command needed to travel across the campus, it had to pass through an overloaded router. The router responded with “Busy Router” messages — a standard BACnet response indicating it couldn’t process the request. But those messages were invisible to the BMS front-end. From the operations team’s perspective, the system looked healthy. In reality, it was quietly and consistently failing to execute commands.

This is the nature of a BACnet router bottleneck in a complex brownfield environment. It doesn’t announce itself. It accumulates gradually, masked by a front-end that has no visibility into what’s happening at the network layer. By the time the symptoms are obvious enough to trigger a formal investigation, the problem has typically been building for months or years.

Once the bottleneck was visible, the fix was architectural rather than mechanical. The team split the ten Iconics servers, dedicating five to the East campus and five to the West. That single change stopped the heavy cross-campus traffic from funneling through the overloaded router. Response times stabilized. The random failures stopped. The network Health Score moved from a critical 32% to a stable 72% immediately after the change — a strong result for a brownfield site of this scale and complexity.

The Cost of a Problem No One Can See

Resolving the bottleneck was only part of the story. The diagnostic data also made it possible to quantify what the problem had actually cost — and that analysis produced numbers that reframed the entire situation.

The BACnet router bottleneck hadn’t started in May 2024, when CBRE first opened the investigation. It traced back to at least June 2023. Over that period, 118,000 hours of systems failed to run as scheduled. Across the campus, 38% of equipment failed to turn on for more than half of their scheduled operating days.

Those numbers matter beyond the comfort complaints that triggered the investigation. Missed runtime at that scale corrupts energy data. If equipment isn’t running on schedule, the energy consumption figures recorded by the BMS don’t reflect actual building performance. Efficiency reporting becomes unreliable. Sustainability targets built on that data become suspect. The client wasn’t just dealing with uncomfortable tenants — they were operating with a fundamentally inaccurate picture of how their buildings were actually performing.

Yi framed the outcome clearly: “We were also able to balance a problem between energy efficiency management and improving our client’s comfort. It made the case for why it’s worth investing in network visibility.”

That framing is worth sitting with. The investment in OT network diagnostics didn’t just solve a comfort problem — it restored the integrity of the data the client relied on to manage energy across an 80-building campus. The case for network visibility, in this instance, was made in 118,000 hours of evidence.

For facilities teams managing large, mixed-vendor BAS environments, the CBRE case is a useful reference point. When schedules fail and the BMS reports no errors, the reflex is to keep adjusting the automation layer. But if a BACnet router bottleneck is silently dropping command packets, no amount of reprogramming resolves anything. The problem is below the automation layer, in a part of the system that traditional BMS troubleshooting tools aren’t designed to see. Closing the gap between what the front-end reports and what’s actually happening on the network is the only way to find — and permanently fix — failures like this one.

The Fix was Architechtural, Not Mechanical

Once the problem was visible, the solution was straightforward. The team split the ten Iconics servers — five dedicated to the East campus, five to the West. Cross-campus traffic stopped forcing its way through the overloaded router.

Eliminating that bottleneck moved the network Health Score from 32% to 72% immediately — a strong result for a brownfield site of this complexity.

April Yi, Director of Digital Engineering at CBREWe were also able to balance a problem between energy efficiency management and improving our client’s comfort. It made the case for why it’s worth investing in network visibility.

118,000 hours of missed runtime

The diagnostic data didn’t just solve the current problem. It also enabled a retrospective analysis that made the true cost of the issue concrete.

The root cause hadn’t started in May 2024. It had been active since at least June 2023. Over that period:

- 118,000 hours of systems did not run as scheduled

- 38% of equipment failed to turn on for more than half of their scheduled operating days

That scale of missed runtime doesn’t just affect comfort. It corrupts energy data, undermines efficiency reporting, and erodes trust in the BMS as a reliable tool.

“We were also able to balance a problem between energy efficiency management and improving our client’s comfort,” Yi said. “It made the case for why it’s worth investing in network visibility.”

What this means for complex BMS environments

The CBRE case is a useful example of a pattern that shows up across large, long-running facilities: the automation layer gets the blame for problems that originate in the network layer. When the BMS front-end shows no errors, the instinct is to keep tuning the programming. But if the network can’t reliably deliver the commands, no amount of reprogramming fixes anything.

BACnet network troubleshooting requires visibility at the packet level — not just what the BMS reports, but what’s actually moving across the network. That distinction is the difference between months of unresolved tickets and a targeted architectural fix that resolves the issue for good.

April Yi, Director of Digital Engineering at CBRE"We were also able to balance a problem between energy efficiency management and improving our client’s comfort. It made the case for why it’s worth investing in network visibility."

OptigoVN gave the CBRE team what months of conventional troubleshooting couldn’t: visibility into the network layer where the actual problem lived. One packet capture session identified a bottleneck that had been silently corrupting building performance for over a year. If your BMS looks fine but your building isn’t behaving, the answer might be in a part of your network you’ve never been able to see before. See what OptigoVN can find on your network.